TrackingExpert+

Between my sophomore and junior years of college, I took a job as an undergraduate assistant at Iowa State University's Virtual Reality Applications Center, where I worked under Professor Rafael Radkowski on several computer vision applications involving live pointclouds. One of these applications is an open-source program called TrackingExpert+, which was meant to estimate the pose of a real object in front of an RGB-D camera by comparing a pointcloud representation of the object against the camera's depth feed. My role was to develop utility functions and a demo application for this program.

Throughout the process, I learned the fundamentals of linear algebra and how it relates to three-dimensional graphics and geometry. I learned the basics of building projects and libraries with CMake and received some much-needed hands-on experience with Git in a professional setting. I combined all of this with my existing classroom experience in C and C++ and was able to contribute a significant amount to the repository.

Unfortunately, since I transferred to my job at DMI, the project seems to have been halted. However, it got me interested in graphics technology and HCI, and in particular it started me on a long career trajectory toward pointcloud interaction. My experience in this role heavily shaped what I want to do as a computer engineer and is highly representative of the kinds of technologies I see myself developing in my professional career. I would be more than happy to explore HCI, computer graphics, and pointcloud interaction in more depth by applying my experience in new settings.

You can find the TrackingExpert+ repository here: github.com/rafael-radkowski/TrackingExpertPlus

Senior Design Project - Memworld

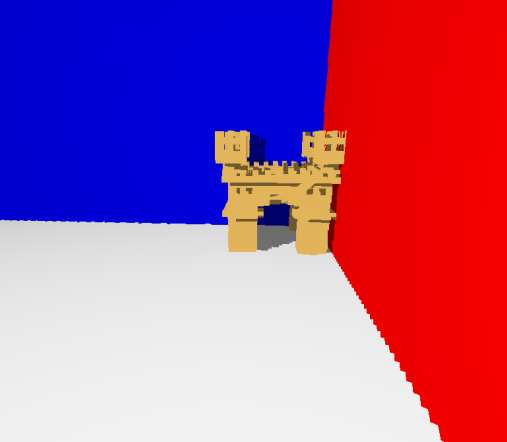

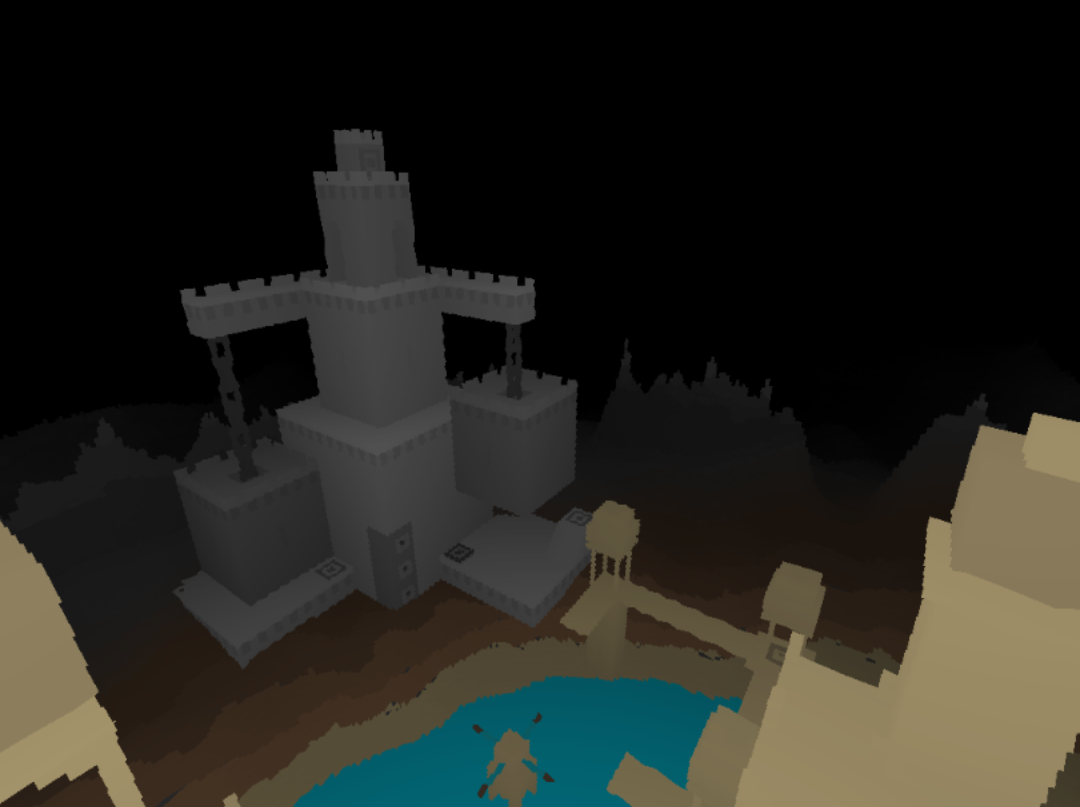

As a capstone project for my undergraduate degree, I joined a team of software, electrical, and computer engineers to help one of the university's professors develop a raycasting engine called Memworld. The main idea of this engine is to render a 3D world using data stored directly in memory as an array of voxels. This could have a variety of benefits, including simplified collision detection algorithms and quick visual representations of what is currently stored in memory. The code for the base engine already existed, but it ran only on the CPU, which caused severe slowdown once the rendered image reached a certain resolution. Our goal was to use GPGPU libraries to parallelize the rendering of the environment and eliminate this slowdown. We were then to create a simple game with the engine to demonstrate that it could function in a practical environment.

After researching our options, we decided that OpenCL, an open standard for writing programs that run across different types of processors, was our best choice. We considered alternatives such as NVIDIA's CUDA, but decided against it because it would favor computers with NVIDIA graphics cards when the intention was to allow any computer to run the program. With OpenCL, we were able to parallelize the engine easily by using one GPU thread for every pixel shown in the renderer's viewport. We found a simple and effective way to load voxelized models into the environment, created some simple movement controls, and researched how we could further improve the rendering engine with optimized algorithms and what implementing a basic physics engine would entail.

We designated a point of contact with our client for ease of communication and established regular client and team meetings for formal updates on our progress. We also tracked our progress using the workflow tools available to us on GitLab. We presented our initial design to the client in May of 2022 and our final design and test application to an industry panel in December of the same year. For more information, check out our team website here! sddec22-18.sd.ece.iastate.edu/

Therapy Dog Communicator

This project was a product of my CPRE 186 course for team building and portfolio development. I worked on a team of three people (including myself) to create a phone application that would help people communicate with their therapy dogs. There are people who are given therapy dogs because they have a hard time communicating with other people. Unfortunately, some of those people still have trouble communicating with their pets. We created this Therapy Dog Communicator app in order to make these interactions easier.

Our end product was a list of buttons that each play a sound bite when tapped. Each sound bite is a different command that the user may ask their therapy dog to perform. Since dogs' names vary immensely and some commands might be unique to different people, we included a feature that lets the user change the dog's name and the labels of eight custom buttons to any word the phone's text-to-speech engine can pronounce. For example, if the user would like their therapy dog to respond to the number "eight," the user can change the name of one of their custom buttons to "eight" in the options menu, and that button will be created.

After deciding that we would create our app for the Google Play Store, as we all had experience with Java, we ran into the problem of sharing strings across all of the app's screens. In Android app development, there is a table of string resources that the program gets its text elements from. These can be used for titles, text box descriptions, and other phrases in the application that can't be changed by the user. For some strings, however, we needed the user's input to change their values, particularly for the custom buttons in the program. Most of my contribution to the project was spent looking through the Android documentation to find a proper solution. What I found was SharedPreferences, an Android interface for persistent key-value storage that can be accessed from anywhere in an application. It stores values under named keys and allows those values to be read and changed throughout the application. Using this, we were able to get our custom buttons and text-to-speech functions finalized.

The Therapy Dog Communicator project was a great exercise in teamwork and professionalism for me. I had to put my trust in a team of other people to finish a project before a given deadline, and with enough care that it was fully functional. In addition, I gained a sense of how application development works and how important it is to be familiar with the documentation I am working with, both before and during work on a project.

SeaSalt

In the Fall semester of 2020, I worked with a group of other software development majors on a project called SeaSalt. The goal was to create a mobile application that would help students keep track of their assignments and stay in touch with the rest of their course. This would be done by creating message boards for each course that would allow students and professors to communicate with one another, along with course schedules that would be automatically added to a student's cumulative course calendar, which works as a sort of assignment notebook. In other words, it would be an app that combines and expands on the concepts of Canvas and Discord. Though this was part of a class project, we believed there was a clear need for this app. This was the semester immediately following the COVID-19 outbreak, and since Canvas felt slightly outdated for a teaching environment that took place entirely online, we took it upon ourselves to create a program that would replace the current system with a new and improved one.

We were all reasonably proficient in Java, and since Android apps can be written in Java, we decided that writing an Android application as an initial version was the best course of action. Since the app relied heavily on an internet connection and data storage, our main challenge was to set up a database system that could be accessed and edited by multiple instances at the same time. This was required for storing a range of data that included (but was not limited to) usernames and passwords, message board histories, assignment dates, and student and professor profiles. In order to communicate with these databases, we integrated the Websockets and Volley libraries into our backend via Gradle. In addition, we had to develop an understanding of Android Studio's UI/UX system to create an interface that would be intuitive and satisfying for any user to interact with. In order to streamline project management, we used Git and GitLab features such as flow control and issues.

By the end of the project, we had created a minimum viable product for the application. This consisted of a sign-up and sign-in screen, a professor and classroom directory, and an accessible messaging board. All of these connected to a database that stored the data until it was needed. At the time, with a bit more development, we would have had an app that our university could feasibly have used. However, these days similar features have been implemented in Discord to the point where many classes use that platform for a purpose similar to that of SeaSalt. Regardless of its relevance and usefulness now, it is satisfying to know that we were on the right track with this project's development, and it was a great experience to learn about database development and high-level network communication in mobile applications.

The code for our project can be found on my teammate's repository, where he has backed up our project files:

github.com/AJGKooK/SeaSalt

Handwriting Analysis Application

Today, there are applications that can compare handwritten documents to determine whether they were written by the same person. This is useful for finding suspects in forensic science and analyzing documents in historical research. However, these programs cannot show specifically why two handwriting samples are similar to each other, as they can only observe the general similarities between them as opposed to specific attributes of the samples such as boldness, slant, etc. Implementing an algorithm that could compare these attributes poses a major problem because the visual qualities of any given image are highly subjective.

I teamed up with a group of my peers to take on this challenge at the beginning of 2022. Variational auto-encoders (VAEs) are machine learning models that learn to encode varied features of input data without supervision from a user. A variant called a Beta-VAE encourages a single model to learn a set of separate variables, each based on a different feature of the input data. By training a model that outputs meaningful values based on observable and apparent differences in certain qualities, we would be able to create an application that can parse and compare features from samples of handwriting. This would give the user the ability to describe in plain language the specific differences between multiple pieces of handwriting, which is immensely useful in a court of law, where simply stating that "sample x is 80% similar to sample y" will not truly convince a jury of any similarity.

In the end, our project went unfinished. Given our level of understanding of the subject and the time constraints we had, my group was not able to reach our goal by the deadline. However, in our venture into this subject, we were able to explore a practical application of Beta-VAEs at the implementation level. This improved our understanding of the subject and ultimately made the attempt a rewarding experience.

3D Design

I took a 3D modelling course at my university as a technical elective for my major. Though it's mostly a recreational interest of mine, I believe it's important to include some of the work I did in the course to showcase my capabilities as a 3D designer as well as a software developer, in case visitors find the skill relevant. The work currently shown in this section was all done in Maxon Cinema 4D R26, though I hope to translate those skills to Blender when given the chance.